AI Fairness and Bias Testing: A Comprehensive Guide to Mitigating Adverse Outcomes

Artificial intelligence (AI) has changed how we approach complex decision-making, with applications in domains as varied as healthcare, finance, and human resources. However, the rapid development of AI systems has also raised concerns about fairness and bias in these models. Studies have shown that AI can perpetuate social biases and discriminatory practices, underlining the need for rigorous bias testing and fairness evaluation strategies. This article explains what AI fairness and bias testing is, why it matters, and which techniques and tools help mitigate adverse outcomes.

What is AI Fairness and Bias Testing?

AI fairness and bias testing refers to the systematic evaluation of AI models to detect and mitigate biases, so that these systems produce fair and equitable outcomes. Bias in AI systems arises when models produce systematically unfair results for certain groups, often defined by protected characteristics such as race, ethnicity, or socioeconomic status. The goal of AI fairness and bias testing is to examine the decisions made by AI-driven systems and identify potential biases, enabling the development of more inclusive and equitable AI applications.

Types of Bias in AI Systems

- **Confirmation Bias**: The tendency of AI systems to reinforce existing biases and make judgments based on incomplete information.

- **Statistical Bias**: The disparity in accuracy or performance between AI models when tested on different sub-populations or groups.

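- **Concept Drift Bias**: Bias that emerges when the relationship between inputs and outcomes shifts over time, so that the way a model has learned to interpret and group data elements no longer holds, leading to misinterpretation and biased decisions.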
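The statistical bias entry above describes a gap in accuracy between sub-populations, which can be measured directly. The following is a minimal sketch in plain Python, not a definitive implementation: the function names `accuracy_by_group` and `accuracy_gap` and the toy data are illustrative assumptions rather than part of any specific library.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy} for parallel lists of labels, predictions, and group tags."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


def accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    return max(per_group.values()) - min(per_group.values())


# Hypothetical toy data: true labels, model predictions, and a group tag per row.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 1, 0, 0, 1]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

per_group = accuracy_by_group(y_true, y_pred, groups)
gap = accuracy_gap(per_group)
print(per_group)  # accuracy for each group
print(gap)        # spread between the best- and worst-served group
```

A large gap does not by itself explain where the disparity comes from, but it indicates which groups deserve a closer look.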

Why is AI Fairness and Bias Testing Important?

Detecting and mitigating biases in AI systems is crucial for building trust and ensuring fairness in decision-making processes. AI-driven systems have far-reaching consequences, affecting the lives of individuals and communities. Therefore, it is essential to employ rigorous fairness metrics and auditing techniques to identify potential biases and ensure that AI applications are transparent, accountable, and respectful of human values.

Tools and Techniques for AI Fairness and Bias Testing

- **Audit Framework**: An iterative process of testing, evaluation, and refinement to ensure that AI systems operate fairly and without bias.

- **Regular Monitoring**: Continuous testing and evaluation of AI models to detect and address any emerging biases or disparities.

- **Intersectional Analysis**: Examining the intersections of different biases and how they impact specific groups or populations.
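To make intersectional analysis concrete, the sketch below computes the rate of favourable decisions for single attributes and then for their combination. It is only an illustration under stated assumptions: the `selection_rates` helper, the `gender` and `age_band` attributes, and the records are all hypothetical.

```python
from collections import defaultdict


def selection_rates(records, attributes):
    """Favourable-decision rate for every combination of the given attributes.

    records: list of dicts, each with a binary "decision" key (1 = favourable).
    attributes: tuple of dict keys that together define a subgroup.
    """
    positives = defaultdict(int)
    counts = defaultdict(int)
    for row in records:
        key = tuple(row[a] for a in attributes)
        counts[key] += 1
        positives[key] += row["decision"]
    return {key: positives[key] / counts[key] for key in counts}


# Hypothetical decisions, two rows per (gender, age_band) subgroup.
records = [
    {"gender": "f", "age_band": "under_40", "decision": 1},
    {"gender": "f", "age_band": "under_40", "decision": 1},
    {"gender": "f", "age_band": "40_plus", "decision": 0},
    {"gender": "f", "age_band": "40_plus", "decision": 0},
    {"gender": "m", "age_band": "under_40", "decision": 0},
    {"gender": "m", "age_band": "under_40", "decision": 0},
    {"gender": "m", "age_band": "40_plus", "decision": 1},
    {"gender": "m", "age_band": "40_plus", "decision": 1},
]

by_gender = selection_rates(records, ("gender",))
by_age = selection_rates(records, ("age_band",))
by_both = selection_rates(records, ("gender", "age_band"))

print(by_gender)  # each gender looks equally treated
print(by_age)     # each age band looks equally treated
print(by_both)    # the combined subgroups tell a different story
```

In this toy data every single-attribute rate is 0.5, yet two of the four combined subgroups never receive a favourable decision. That is the kind of disparity a one-attribute-at-a-time audit can miss.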

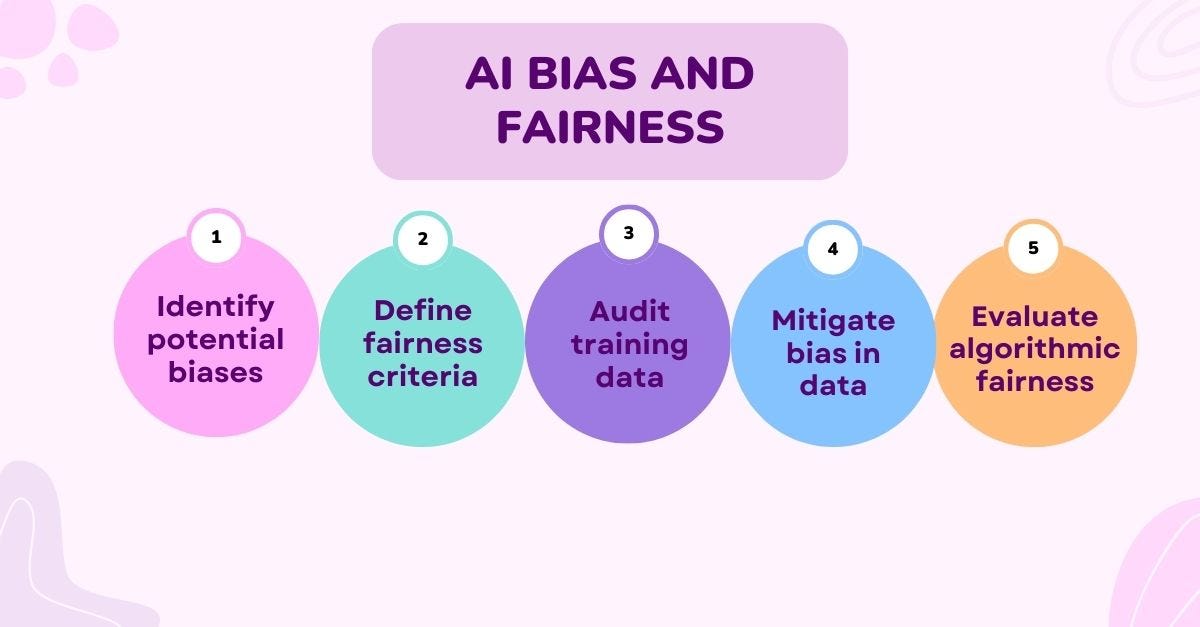

Strategies for Conducting AI Fairness and Bias Testing

Implementing effective AI fairness and bias testing strategies requires a multidisciplinary approach, incorporating insights from machine learning, data science, sociology, and ethics. Some best practices for conducting fairness audits and mitigating bias in AI systems include:

- **Develop Data-Inclusive Strategies**: Use diverse datasets, including those that capture minority perspectives and experiences, to improve AI model performance and fairness.

- **Employ Fairness Metrics**: Regularly evaluate AI models using fairness metrics and audit frameworks to identify and address biases.

- **Implement Human Oversight**: Incorporate human oversight and review processes to ensure that AI-driven decisions are transparent, explainable, and fair.
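The article does not name specific fairness metrics, so the sketch below picks three widely used ones as an assumption: demographic parity difference, disparate impact ratio, and equal opportunity difference (the gap in true-positive rates). The `fairness_report` function, the choice of a "privileged" reference group, the toy data, and the 0.8 review threshold (a commonly cited rule of thumb, not a universal standard) are all illustrative.

```python
def rate(values):
    """Mean of a non-empty list of 0/1 values."""
    return sum(values) / len(values)


def fairness_report(y_true, y_pred, groups, privileged):
    """Compare the privileged group with everyone else on three metrics.

    Assumes both groups are non-empty, both contain at least one true positive
    label, and the privileged group has a non-zero selection rate.
    """
    rows = list(zip(y_true, y_pred, groups))
    priv_rate = rate([p for _, p, g in rows if g == privileged])
    other_rate = rate([p for _, p, g in rows if g != privileged])
    # True-positive rate: predictions restricted to rows whose true label is 1.
    priv_tpr = rate([p for t, p, g in rows if g == privileged and t == 1])
    other_tpr = rate([p for t, p, g in rows if g != privileged and t == 1])
    return {
        "demographic_parity_difference": other_rate - priv_rate,
        "disparate_impact_ratio": other_rate / priv_rate,
        "equal_opportunity_difference": other_tpr - priv_tpr,
    }


# Hypothetical toy data: group "a" is treated as the reference group.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

report = fairness_report(y_true, y_pred, groups, privileged="a")

# Assumed policy: route the model to human review when the ratio falls below 0.8.
REVIEW_THRESHOLD = 0.8
needs_review = report["disparate_impact_ratio"] < REVIEW_THRESHOLD

for name, value in report.items():
    print(f"{name}: {value:.3f}")
print("needs human review:", needs_review)
```

Values near 0 for the two differences and near 1 for the ratio suggest similar treatment across groups. Which metric matters most depends on the application, and some of these metrics cannot all be satisfied at once, so the thresholds are a decision for the people overseeing the system.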

Conclusion

AI Fairness and Bias Testing is a critical step in ensuring that AI systems contribute positively to society, rather than perpetuating social biases and injustices. By exploring the most effective techniques and tools for mitigating biases and disparities in AI-driven applications, we can develop more equitable and transparent AI systems that respect human values and promote fairness in decision-making processes.