Unlocking the Power of Optional Foundation Tuning: A Guide to Customizing Your AI Models

Foundation models are pre-trained models that perform well across a wide range of tasks, but their size makes them expensive to fine-tune. Optional foundation tuning eases part of this problem: instead of starting every fine-tuning run from the base model, you can start from weights that have already been customized.

What is Optional Foundation Tuning?

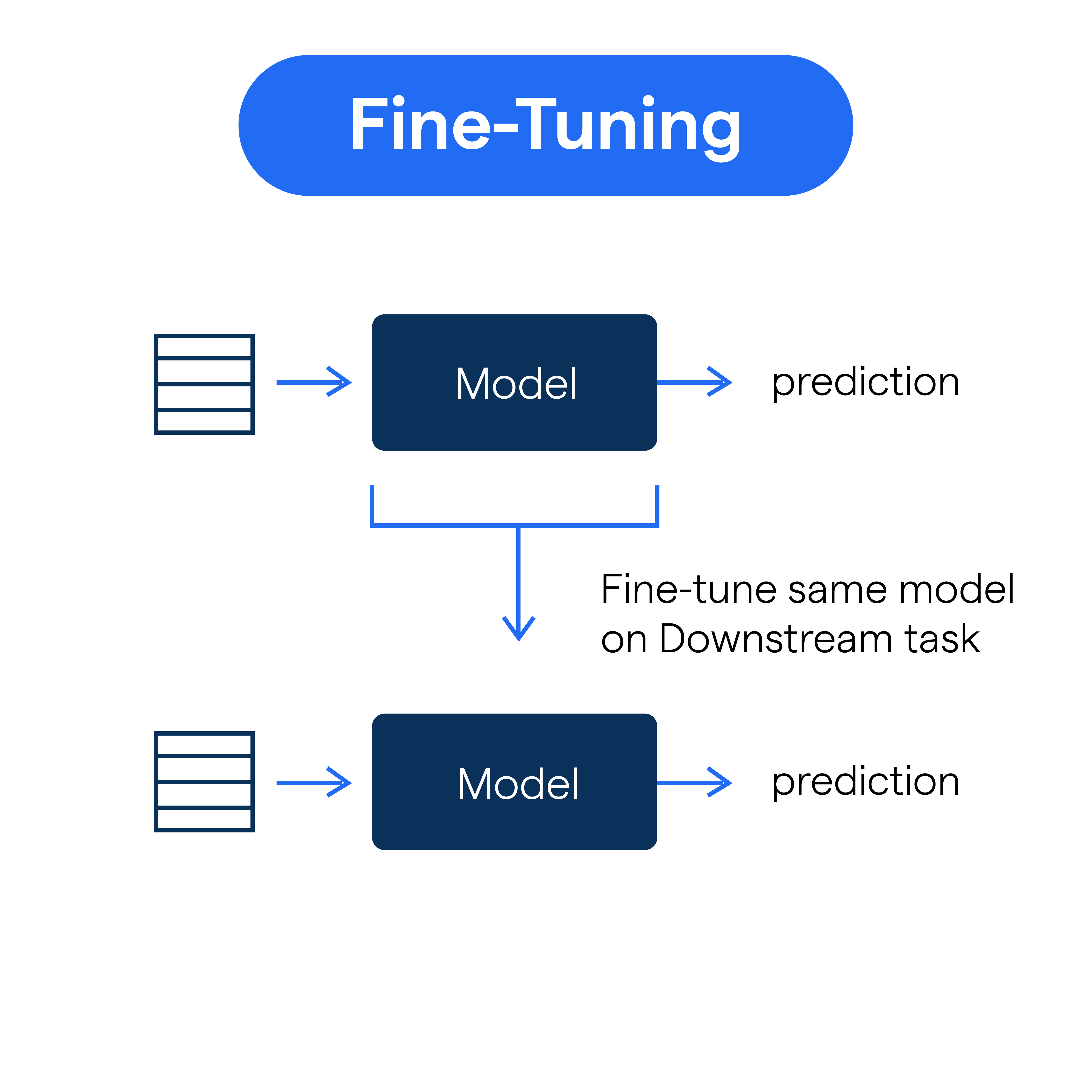

Optional foundation tuning is a method of training and customizing a model by adding custom weights using the optional parameter 'custom_weights_path' in the Foundation Model Fine-Tuning API. This approach lets developers keep the broad capabilities of a foundation model while customizing it on their own small, task-specific data. Fine-tuning continues training and updates the model's weights, which is what adapts the model to a specific domain or task.

Benefits of Optional Foundation Tuning

- **Cost-Effectiveness**: Fine-tuning a pre-trained foundation model is far cheaper than training a comparable model from scratch, because most of the training cost has already been paid.

- **Flexibility**: Optional foundation tuning allows developers to experiment with different model configurations and continue from earlier checkpoints to achieve better results.

- **Improved Performance**: Fine-tuning lets the model specialize in a specific task, which typically improves its results on that task.

How to Perform Optional Foundation Tuning

Performing optional foundation tuning involves the following steps:

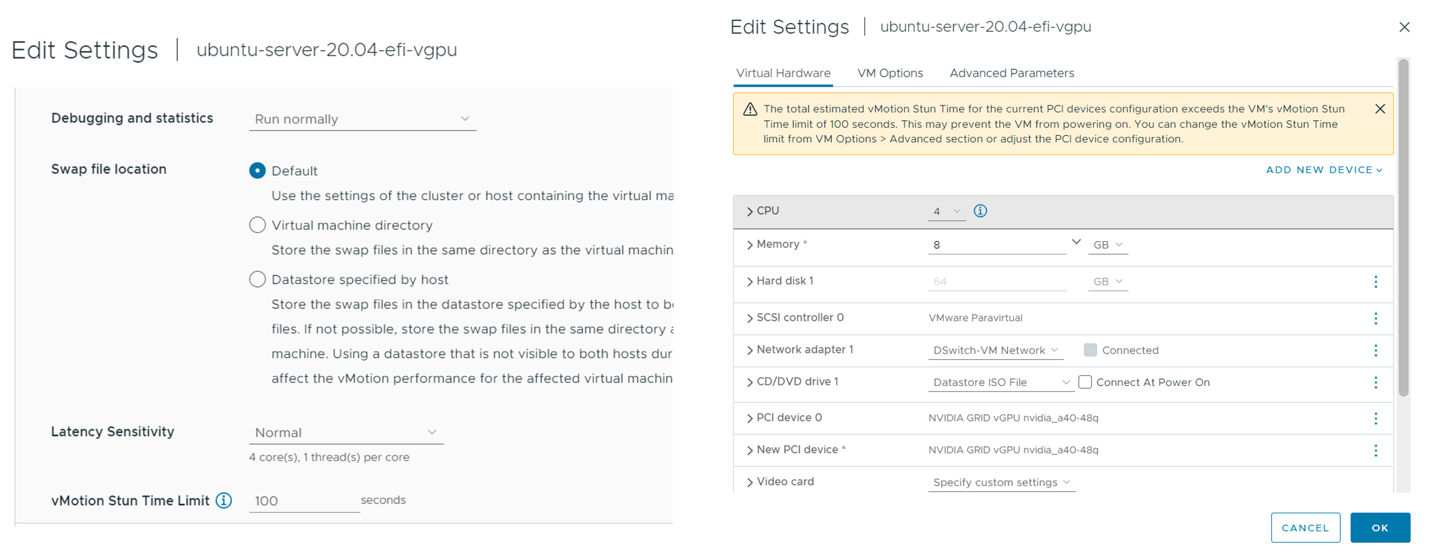

1. Set the 'custom_weights_path' parameter to the checkpoint path from a previous fine-tuning API training run.

2. Fine-tune the foundation model by further training it on a small, task-specific dataset.

3. Monitor the model's performance and adjust the training parameters as needed to achieve better results.
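The steps above can be sketched as a small request builder. This is only an illustration of how an optional checkpoint parameter might be handled, not the actual Fine-Tuning API client: the only name taken from this article is `custom_weights_path`, while `FineTuneRequest`, `build_request`, `model`, `train_data_path`, and all paths are hypothetical placeholders.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class FineTuneRequest:
    """Hypothetical container for fine-tuning settings (not a real API class)."""

    model: str                                  # placeholder base model name
    train_data_path: str                        # placeholder task-specific dataset
    custom_weights_path: Optional[str] = None   # optional checkpoint from a previous run

    def to_payload(self) -> dict:
        # Drop unset optional fields, so omitting the parameter means
        # "start from the base model weights".
        return {k: v for k, v in asdict(self).items() if v is not None}


def build_request(model: str, train_data_path: str,
                  custom_weights_path: Optional[str] = None) -> dict:
    # Reject an empty string early: a blank path is almost certainly a mistake.
    if custom_weights_path is not None and not custom_weights_path.strip():
        raise ValueError("custom_weights_path must be non-empty when provided")
    return FineTuneRequest(model, train_data_path, custom_weights_path).to_payload()


# Step 1 skipped: train from the base model.
fresh = build_request("example-base-model", "/data/train.jsonl")

# Step 1 applied: continue from a checkpoint of a previous run (placeholder path).
resumed = build_request(
    "example-base-model",
    "/data/train.jsonl",
    custom_weights_path="/checkpoints/previous-run/checkpoint",
)
```

Keeping the field optional means the same builder covers both a first run and a follow-up run, which mirrors how the parameter is described here.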

Restrictions and Limitations

Some restrictions and limitations of optional foundation tuning include:

- **Computational Resources**: Fine-tuning a foundation model can require significant computational resources, especially for large datasets.

- **Storage**: Fine-tuned models can be large and require substantial storage space.

- **Model Size**: Very large models may be impractical to fine-tune in full, because memory and compute requirements grow with the number of parameters.
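To make the storage point concrete, here is a rough back-of-the-envelope estimate. It assumes a checkpoint stores only the model weights, at 4 bytes per parameter for fp32 and 2 bytes for fp16 or bf16; optimizer state and other metadata would add to this. The 7-billion-parameter figure is an illustrative assumption, not a model named in this article.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2}


def checkpoint_size_gb(num_params: int, dtype: str = "bf16") -> float:
    """Approximate weights-only checkpoint size in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Illustrative 7-billion-parameter model.
size_bf16 = checkpoint_size_gb(7_000_000_000, "bf16")
size_fp32 = checkpoint_size_gb(7_000_000_000, "fp32")
print(f"bf16: {size_bf16:.1f} GB, fp32: {size_fp32:.1f} GB")
```

Every saved checkpoint costs roughly this much again, so keeping several checkpoints per run multiplies the storage requirement.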

Conclusion

- Optional foundation tuning is a valuable method for customizing pre-trained foundation models, enabling developers to adapt the model to a specific task or domain.

- The use of optional foundation tuning can improve the performance and efficiency of the model, making it an attractive option for many developers.

- By understanding the benefits and limitations of optional foundation tuning, developers can effectively leverage this method to achieve better results in their machine learning projects.

Overall, optional foundation tuning lets developers build on weights they have already customized rather than starting each run from the base model, which makes iterating on a task-specific model more practical.

Also Note:

Foundation Model Fine-Tuning supports adding custom weights using the optional parameter custom_weights_path to train and customize a model. Checkpoint paths can be found in the Artifacts tab of a previous MLflow run.

Foundation models are computationally expensive to train and are trained on a large, unlabeled corpus. Fine-tuning a pre-trained foundation model is an affordable way to take advantage of their broad capabilities while customizing a model on your own small corpus.

Foundation models can be tuned in the following ways: Full fine-tuning, Low-rank adaptation (LoRA) fine-tuning, ...
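To illustrate why LoRA is cheaper than full fine-tuning, the sketch below counts trainable parameters for a single weight matrix. It assumes the standard LoRA formulation, in which the original matrix W (d_out x d_in) is frozen and only two small matrices B (d_out x r) and A (r x d_in) are trained. The layer size and rank are illustrative values, not settings taken from this article.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters when only the low-rank factors B and A are updated."""
    return rank * (d_out + d_in)


# Illustrative layer: a 4096 x 4096 projection with LoRA rank 8.
d_out = d_in = 4096
rank = 8

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full: {full:,}  LoRA: {lora:,}  share: {lora / full:.4%}")
```

For this layer, LoRA trains 65,536 parameters instead of 16,777,216, which is 1/256 of the full matrix.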